SAP’s API Policy v4/2026 vs Agentic AI: What Enterprise Customers Should Do Now

Right now, one of the hottest (and most frustrating) topics in SAP circles is the updated API policy narrative around third‑party generative AI and autonomous agents.

If you’re a customer, it’s easy to get pulled into vendor drama: who is blocking whom, who is trying to lock in which ecosystem, who is “protecting stability” vs. “killing innovation”.

But if you’re the person who actually has to build and run SAP integrations, the only question that matters is much more boring:

What should we do now so our AI plans don’t depend on brittle SAP interfaces or unclear policy interpretations?

In this post I’ll share how I read SAP’s API policy v4/2026 direction from a customer and architect perspective, what I would stop doing immediately, and what I would build instead if my goal is: “use SAP data for analytics and AI, but remain defensible to security, auditors, and SAP itself”.

What’s changing: agentic AI is now a first-class concern

There has always been a line between:

- published and supported SAP APIs, and

- internal / undocumented interfaces that people use anyway because they’re convenient.

What’s different in 2026 is that “agentic AI” shifts the risk profile dramatically. A traditional integration calls a few endpoints in a predictable sequence. An autonomous agent can:

- plan a sequence of API calls,

- try different paths when something fails,

- repeat queries at scale,

- and do all of that based on prompts that evolve over time.

From a platform owner’s point of view, that’s scary — whether you’re SAP, Microsoft, Salesforce, or anyone else. It increases load risk, stability risk, and makes “what exactly is consuming our APIs?” harder to control.

So the policy shift itself is not surprising. The impact on customers is.

The real customer risk: building your AI strategy on gray areas

Most customer frustration I’ve seen isn’t about “SAP wants published APIs”. That’s reasonable.

The frustration comes from uncertainty and gray areas:

- What exactly is allowed for AI use cases?

- What about partners and tools that rely on existing integration patterns?

- What about internal AI initiatives that don’t “train a model on SAP data” but do analytics or RAG?

If your architecture depends on undocumented endpoints, private tables, or “we’ve always done it this way”, you’re exposed. Not just to SAP policy — also to security findings and audit questions.

That’s why I think this moment is a forcing function for customers:

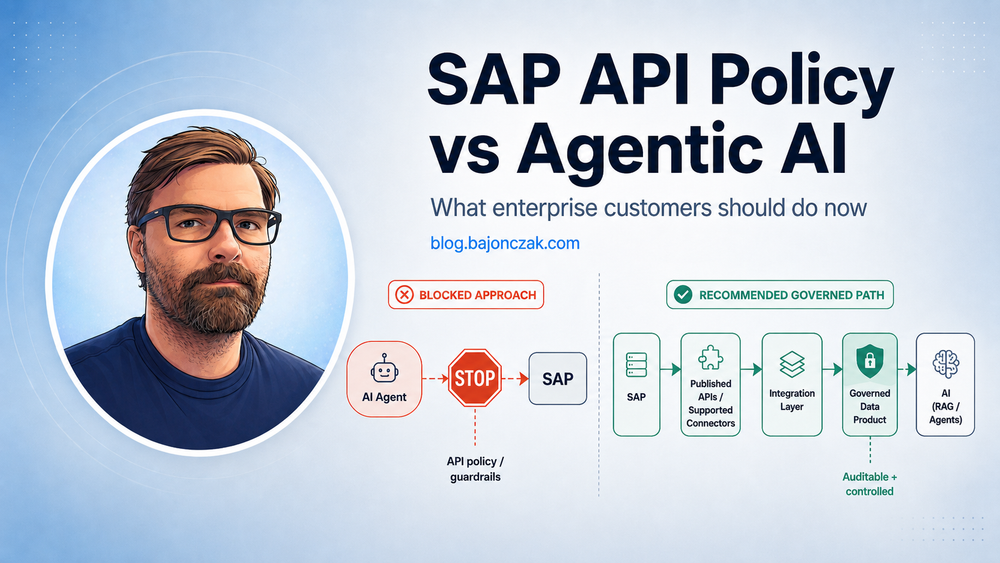

Move your SAP-to-AI story from “agent calls SAP directly” to “governed data products and well-defined interfaces”.

What I would stop doing immediately

Here are the patterns I would actively kill off (or at least freeze) if I was responsible for SAP + AI integrations right now.

1) “AI agent calls SAP APIs directly” without hard guardrails

If your plan is “let’s connect Copilot / a third-party agent / a generic LLM tool directly to SAP and see what happens”, you’re betting on a policy gray zone and a technical stability risk at the same time.

Even if it’s technically possible today, it’s rarely defensible long-term.

2) Undocumented APIs as strategic dependencies

If a connector, script, or partner product relies on undocumented endpoints, treat it like technical debt with a deadline. It might work for years — until it doesn’t, and then your AI roadmap gets blocked by a contract conversation.

3) Bulk extraction without clear purpose

“Let’s copy all SAP data into a lake and figure out AI later” is almost always a governance failure. If you can’t explain:

- which data you extract,

- why you need it,

- who owns it,

- who can access it,

then you are creating a bigger problem than any API policy could.

What I would build instead: the “governed data product” pattern

The most robust response I see is to treat SAP data like what it is: a system of record with strong boundaries.

You don’t connect random agents to your system of record. You build a controlled integration path and land curated data in a governed platform you own.

A generic reference architecture looks like this:

flowchart LR

SAP[SAP (system of record)] -->|Published APIs / supported connectors| INT[Integration Layer]

INT -->|Curated datasets| DP[Data Platform (Fabric/Lakehouse/Warehouse)]

DP --> BI[BI / Reporting]

DP --> AI[AI workloads (RAG / agents / notebooks)]

AI --> OUT[Teams / Copilot extensions / Tickets]

This pattern has three advantages in the current climate:

- Policy defensibility: you can point to supported interfaces and data minimization.

- Security trimming: you control access at the data platform layer with clear roles and logging.

- Stability: agents query curated datasets, not production SAP APIs at unpredictable scale.

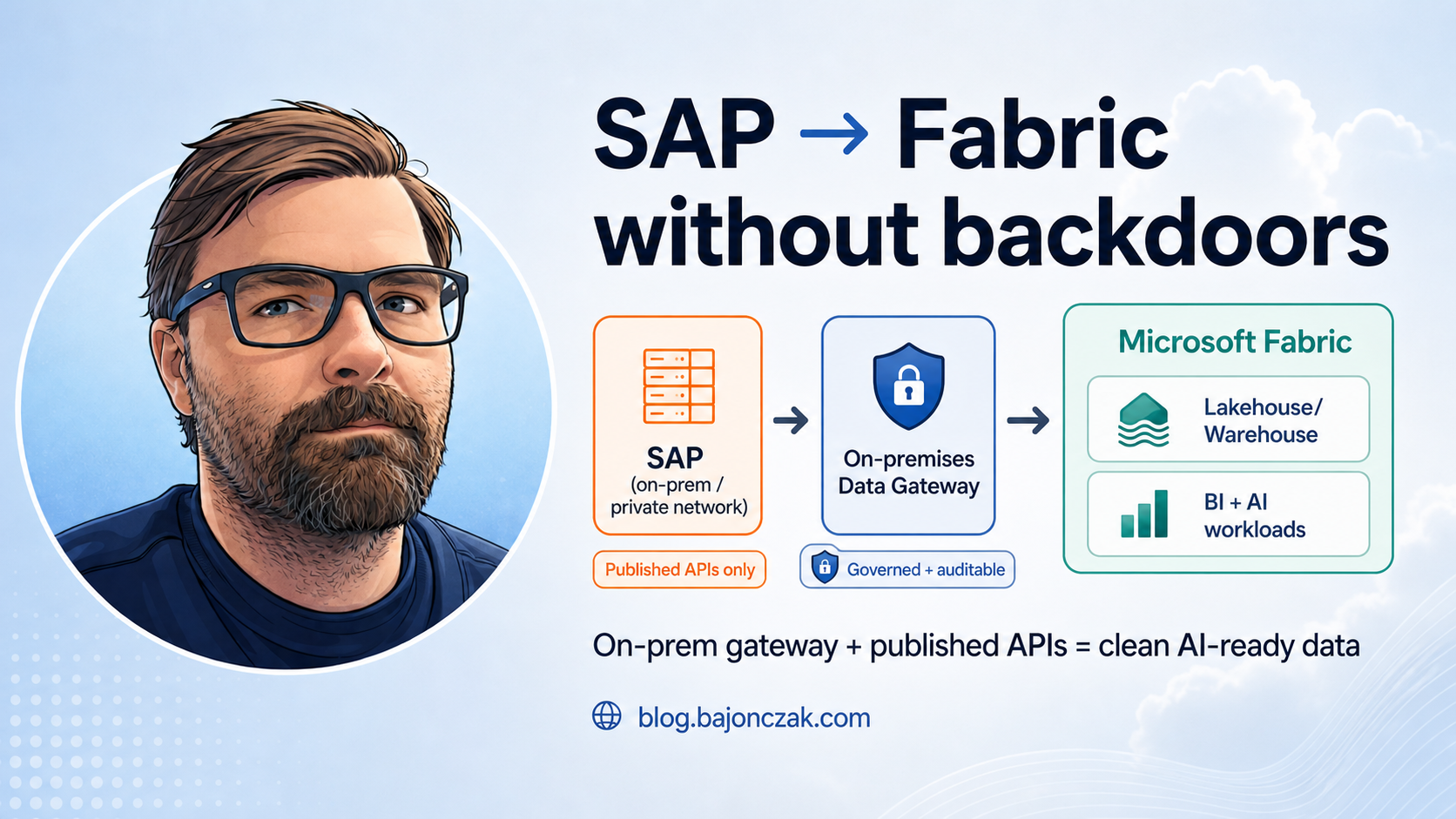

If your SAP systems are on-prem or in private networks, Microsoft Fabric (for example) supports connecting to on‑prem sources via the on‑premises data gateway in its Data Factory experience. That lets you keep SAP private while still enabling a governed pipeline to the cloud.

How to keep AI innovation alive without violating boundaries

The goal is not to slow down innovation. The goal is to move innovation to the right layer.

Instead of “agent talks to SAP”, aim for:

- Agents talk to tools. (MCP/Foundry style tool contracts.)

- Tools talk to curated datasets or approved APIs.

- All sensitive logic sits in backends you control. Not in prompts.

This is also how you stay model-agnostic: you can swap the LLM, while your tool contracts, guardrails, and datasets remain stable.

Practical checklist: what to clarify this month

If you want to respond proactively rather than emotionally, here’s what I would clarify within the next 2–4 weeks:

- Inventory: which integrations currently touch SAP via undocumented/private interfaces?

- AI scope: which AI use cases do we actually want (analytics, RAG, agents that act)?

- Published APIs: for each use case, which published interfaces exist (and which don’t)?

- Data products: what is the smallest curated dataset that still creates value?

- Ownership: who owns the dataset, the pipeline, and the downstream agent?

- Access model: how do roles map to what people/agents can see?

- Logging & review: how will you detect “this agent is pulling too much”?

None of this is glamorous, but it’s exactly what turns “AI ambition” into “AI you can run in production”.

My take

It’s tempting to treat SAP’s API policy shift as a pure vendor power move. Maybe it is, maybe it isn’t. As a customer, that framing doesn’t help you.

What helps you is a durable architecture that survives policy changes:

- published interfaces where possible,

- curated data products instead of bulk dumps,

- agents operating on governed datasets and tool contracts,

- and clear ownership + observability.

If you build that, you can keep innovating with AI — even if SAP, Microsoft, and everyone else keeps adjusting the rules of the game.

And you’ll have a much stronger position in any discussion with SAP or partners, because you’re not asking for a loophole. You’re building a defensible integration story.