Enterprise Agent Governance on Azure in 2026: Registry, Identity, Guardrails, Observability

Enterprise AI is moving fast from “Copilot writes text” to “agents run workflows”, and that shift changes the whole governance problem.

When agents can call tools, trigger pipelines, query systems, create tickets, or change configuration, you no longer have a chat feature. You have automation with a blast radius.

In this post I’ll lay out, in practical terms, how I think about enterprise agent governance on Azure in 2026:

- how to keep an inventory of agents,

- how to bind agents to identity and least privilege,

- how to enforce guardrails in code, not in prompts,

- how to monitor and audit what agents actually do.

This is not a product comparison or an “Azure marketing” piece. It’s the architecture I’d want in place before I scale from 2 agents to 20.

The problem: agent sprawl is the new shadow IT

In the early phase, agents feel small:

- one Teams bot that answers questions,

- one incident helper that suggests next steps,

- one integration agent that pulls numbers from a BI system.

Then it grows. Different teams spin up different agents, in different tools, with different credentials. Suddenly nobody can answer simple questions like:

- How many agents do we have?

- Who owns them?

- Which systems can they touch?

- What identity do they run under?

- What did they do last week?

That’s why I treat “agent governance” as a first-class platform problem, not an afterthought.

A practical governance model: four pillars

If I had to compress agent governance into one slide, I’d use four pillars:

- Registry (inventory + ownership)

- Identity (least privilege + boundaries)

- Guardrails (policy in code + safe tools)

- Observability (logs, metrics, audits)

Everything else is a detail or an implementation choice.

Pillar 1: an agent registry that is actually useful

You don’t need a fancy product on day one. You need a single source of truth that answers the basics.

For each agent, I want an “agent fact sheet” with:

- Name + one-line purpose

- Owner (person/team) + escalation contact

- Frontends (Copilot, Teams, web UI, CLI, scheduled job)

- Capabilities (read/write systems + allowed actions)

- Identity (service principal / managed identity / delegated user)

- Data scope (which data categories it may touch)

- Autonomy level: propose-only vs. act-with-approval vs. autonomous

- Logs: where do I find tool-call logs and errors?

On Azure, I’ve seen teams implement this as:

- a YAML file in a repo (best starting point),

- a small internal web page backed by a table,

- or a “registry service” that agents register with at deployment time.

The key is not the storage. The key is that someone owns it and keeps it current.
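To make the fact sheet concrete, here is a minimal sketch of it as a typed record, plus a check that flags entries nobody has filled in. The class name `AgentFactSheet`, the field names, and the helper `registry_gaps` are my own illustrative choices, not an Azure schema; the same shape maps directly onto a YAML file in a repo.

```python
from dataclasses import dataclass
from enum import Enum


class Autonomy(Enum):
    PROPOSE_ONLY = "propose-only"
    ACT_WITH_APPROVAL = "act-with-approval"
    AUTONOMOUS = "autonomous"


@dataclass
class AgentFactSheet:
    # Field names are illustrative; they mirror the fact-sheet bullets above.
    name: str
    purpose: str
    owner: str
    escalation_contact: str
    frontends: list[str]
    capabilities: dict[str, list[str]]  # system -> allowed actions
    identity: str
    data_scope: list[str]
    autonomy: Autonomy
    log_location: str


def registry_gaps(sheet: AgentFactSheet) -> list[str]:
    """Return the names of fact-sheet fields that are still empty."""
    return [
        field_name
        for field_name, value in vars(sheet).items()
        if value in ("", [], {}, None)
    ]


# Hypothetical example entry with two fields left blank.
incident_helper = AgentFactSheet(
    name="incident-helper",
    purpose="Suggests next steps during incidents",
    owner="platform-team",
    escalation_contact="",
    frontends=["Teams"],
    capabilities={"ticketing": ["read", "comment"]},
    identity="managed identity",
    data_scope=["incident data"],
    autonomy=Autonomy.PROPOSE_ONLY,
    log_location="",
)

print(registry_gaps(incident_helper))  # ['escalation_contact', 'log_location']
```

A check like this can run in CI on every registry change, which is one cheap way to keep the sheet current without adding a review meeting.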

Pillar 2: identity and least privilege (where the blast radius lives)

Every agent runs under some identity. This is where most real-world risk lives.

I see two common patterns:

- Delegated user (agent acts on behalf of the user’s permissions)

- Service identity (agent acts as a service principal / managed identity)

The delegated-user pattern fits well for “inside Microsoft 365” scenarios. Service identities are common for cross-system automation (SAP, ticketing, BI, infrastructure).

My default rules on Azure:

- Prefer managed identities over long-lived client secrets.

- Scope permissions aggressively (RBAC roles, resource groups, specific APIs).

- Avoid “god-mode” identities that can read everything and write everywhere.

- Separate identities by environment (dev/test/prod) and by risk level.

When someone asks “can we let the agent do X?”, the first question I ask is: what identity would it run under, and what’s the blast radius if it’s misused?
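One way to make the blast-radius question routine is to scan an agent identity's role assignments for overly broad grants. The sketch below works on plain dictionaries with hypothetical keys (`principal`, `role`, `scope`), assumed to come from some export of your role assignments; it does not call any Azure API. The role names are Azure built-in roles and the scope strings follow the usual resource ID shape, but the thresholds for "too broad" are my own assumption.

```python
# Built-in roles that grant wide write or permission-management rights.
BROAD_ROLES = {"Owner", "Contributor", "User Access Administrator"}


def scope_level(scope: str) -> str:
    """Classify an Azure scope string by how much it covers."""
    parts = scope.strip("/").lower().split("/")
    if parts[0] == "subscriptions" and len(parts) == 2:
        return "subscription"
    if len(parts) == 4 and parts[2] == "resourcegroups":
        return "resource-group"
    if len(parts) > 4 and parts[2] == "resourcegroups":
        return "resource"
    return "other"  # management group, root scope, or an unrecognized shape


def risky_assignments(assignments: list[dict]) -> list[str]:
    """Flag assignments whose scope or role suggests a large blast radius."""
    findings = []
    for assignment in assignments:
        level = scope_level(assignment["scope"])
        role = assignment["role"]
        principal = assignment["principal"]
        if level in ("subscription", "other"):
            # Even read-only roles at this level can "read everything".
            findings.append(f"{principal}: {role} at {level} scope")
        elif role in BROAD_ROLES:
            findings.append(f"{principal}: broad role {role} on a {level}")
    return findings


# Hypothetical assignments for two agent identities.
example = [
    {"principal": "agent-bi-prod", "role": "Reader",
     "scope": "/subscriptions/0000/resourceGroups/rg-bi/providers/"
              "Microsoft.Storage/storageAccounts/stbi"},
    {"principal": "agent-ops-prod", "role": "Contributor",
     "scope": "/subscriptions/0000"},
]

for finding in risky_assignments(example):
    print(finding)  # agent-ops-prod: Contributor at subscription scope
```

Treat the output as a prompt for a conversation, not a verdict: a flagged assignment may be justified, but someone should be able to say why.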

Pillar 3: guardrails belong in code, not in prompts

Prompting is not policy.

If your safety relies on a paragraph that says “Please do not leak secrets”, you will eventually lose.

In practice, my approach is:

- Expose small, well-named tools with strict schemas (MCP style works well for this).

- Validate inputs server-side (types, ranges, allow-lists).

- Apply access control before the model sees any data.

- Implement topic guards for sensitive categories where needed (compensation, layoffs, investigations, security incident details).

Also: never give agents a generic “call_any_http(url)” or “run_sql(query)” tool unless you’re deliberately building a security incident simulation. Those tools are basically an exfiltration API with a friendly UI.
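As a contrast to a generic tool, here is a minimal sketch of a narrow one with server-side validation and an access check. Everything in it is hypothetical: the `create_ticket` tool, the queue and priority allow-lists, and the `caller_queues` parameter standing in for whatever your identity layer says the caller may use. It only shows where the checks sit, not how a real ticketing API is called.

```python
from dataclasses import dataclass

# Hypothetical allow-lists; in practice these would come from configuration.
ALLOWED_QUEUES = {"it-helpdesk", "facilities"}
ALLOWED_PRIORITIES = {"low", "medium", "high"}
MAX_SUMMARY_LENGTH = 200
EXPECTED_FIELDS = {"queue", "priority", "summary"}


class ToolInputError(ValueError):
    """Raised when a tool call does not match the tool's schema."""


@dataclass(frozen=True)
class CreateTicketRequest:
    queue: str
    priority: str
    summary: str


def parse_create_ticket(raw: dict) -> CreateTicketRequest:
    """Validate model-supplied arguments: fields, types, allow-lists, ranges."""
    if set(raw) != EXPECTED_FIELDS:
        raise ToolInputError(f"expected exactly the fields {sorted(EXPECTED_FIELDS)}")
    if not all(isinstance(raw[name], str) for name in EXPECTED_FIELDS):
        raise ToolInputError("all fields must be strings")
    if raw["queue"] not in ALLOWED_QUEUES:
        raise ToolInputError("queue is not on the allow-list")
    if raw["priority"] not in ALLOWED_PRIORITIES:
        raise ToolInputError("priority is not on the allow-list")
    summary = raw["summary"].strip()
    if not 1 <= len(summary) <= MAX_SUMMARY_LENGTH:
        raise ToolInputError(f"summary must be 1 to {MAX_SUMMARY_LENGTH} characters")
    return CreateTicketRequest(raw["queue"], raw["priority"], summary)


def create_ticket(raw: dict, caller_queues: set[str]) -> CreateTicketRequest:
    """Validate first, then authorize; only then would the real API be called."""
    request = parse_create_ticket(raw)
    if request.queue not in caller_queues:
        raise PermissionError("caller may not create tickets in this queue")
    return request  # a real tool would call the ticketing system here


ticket = create_ticket(
    {"queue": "it-helpdesk", "priority": "low", "summary": "Laptop fan is noisy"},
    caller_queues={"it-helpdesk"},
)
print(ticket.queue, ticket.priority)  # it-helpdesk low
```

The point of the design is that the model never decides what is allowed. It can only propose arguments, and the server rejects anything outside the schema or the caller's permissions regardless of what the prompt said.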

Pillar 4: observability and auditability (the part most teams skip)

Even with perfect design, things go wrong. Models hallucinate. Tools error. People ask weird questions. Agents get used in unexpected ways.

So you need telemetry that answers:

- Which agent was invoked?

- By whom (user context) and from which frontend?

- Which tools did it call, in which order?

- What systems did those tools touch?

- What was the outcome (success/failure, duration, error class)?

On Azure, this typically means:

- centralized logging (e.g. Log Analytics / Application Insights),

- metrics and dashboards per agent/tool,

- alerts for unusual patterns (spike in tool calls, repeated failures, access denials).
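The telemetry questions above translate fairly directly into one structured event per tool call. The following is a minimal sketch using only the Python standard library; the field names, the `audited_tool_call` helper, and the `emit` parameter are assumptions of mine, and shipping the resulting JSON lines to Log Analytics or Application Insights is deliberately left out.

```python
import json
import logging
import time
from contextlib import contextmanager

audit_logger = logging.getLogger("agent.audit")


@contextmanager
def audited_tool_call(agent, user, frontend, tool, target_system, step,
                      emit=audit_logger.info):
    """Wrap one tool call and emit a single JSON audit event when it ends."""
    event = {
        "agent": agent,                  # which agent was invoked
        "user": user,                    # by whom
        "frontend": frontend,            # from which frontend
        "tool": tool,                    # which tool was called
        "step": step,                    # position in the call sequence
        "target_system": target_system,  # which system the tool touched
    }
    started = time.perf_counter()
    try:
        yield
        event["outcome"] = "success"
    except Exception as exc:
        event["outcome"] = "failure"
        event["error_class"] = type(exc).__name__
        raise  # auditing must not swallow the error
    finally:
        event["duration_ms"] = round((time.perf_counter() - started) * 1000, 1)
        emit(json.dumps(event))


# Usage with a list as the sink, so the events can be inspected directly.
events = []
with audited_tool_call("incident-helper", "alice@example.com", "Teams",
                       "create_ticket", "ticketing", step=1, emit=events.append):
    pass  # the real tool call would go here

try:
    with audited_tool_call("incident-helper", "alice@example.com", "Teams",
                           "fetch_runbook", "wiki", step=2, emit=events.append):
        raise TimeoutError("wiki did not respond")
except TimeoutError:
    pass

for line in events:
    print(line)
```

Because every event carries the same keys, per-agent dashboards and alerts on repeated failures or access denials become simple queries rather than log archaeology.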

Auditors don’t care that an LLM is “smart”. They care that you can explain what happened.

How I’d operationalize this (lightweight, not bureaucracy)

Governance doesn’t have to mean slowing everything down.

A practical operating model I like:

- Monthly: review agent registry changes (new agents/tools/permissions).

- Monthly: review tool-call metrics and error patterns for high-risk agents.

- Quarterly: re-validate RBAC scopes and remove unused capabilities.

- Always: any expansion of “write” capabilities requires owner + security sign-off.

This is basically the same rhythm mature teams already use for CI/CD and cloud permissions, just applied to agents.
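The “always” rule is the easiest one to automate. As a rough sketch, assuming the registry stores capabilities as a mapping from system to allowed actions, a CI check could compare the old and new entry and block the change until both sign-offs are present. The set of actions that count as “write” and the approval labels `owner` and `security` are hypothetical.

```python
# Assumption: these action names are what the registry treats as "write".
WRITE_ACTIONS = {"create", "update", "delete"}
REQUIRED_APPROVALS = {"owner", "security"}


def new_write_capabilities(old: dict[str, list[str]],
                           new: dict[str, list[str]]) -> list[tuple[str, str]]:
    """Return (system, action) pairs for write actions added by the change."""
    added = []
    for system, actions in new.items():
        previous = set(old.get(system, []))
        for action in actions:
            if action in WRITE_ACTIONS and action not in previous:
                added.append((system, action))
    return sorted(added)


def change_allowed(old: dict[str, list[str]],
                   new: dict[str, list[str]],
                   approvals: set[str]) -> bool:
    """Allow the change unless it expands write access without both sign-offs."""
    if not new_write_capabilities(old, new):
        return True
    return REQUIRED_APPROVALS <= approvals


before = {"ticketing": ["read"]}
after = {"ticketing": ["read", "create"], "bi": ["read"]}

print(new_write_capabilities(before, after))             # [('ticketing', 'create')]
print(change_allowed(before, after, {"owner"}))          # False
print(change_allowed(before, after, {"owner", "security"}))  # True
```

Read-only additions pass without ceremony, which keeps the process lightweight for the common case and reserves friction for changes that widen the blast radius.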

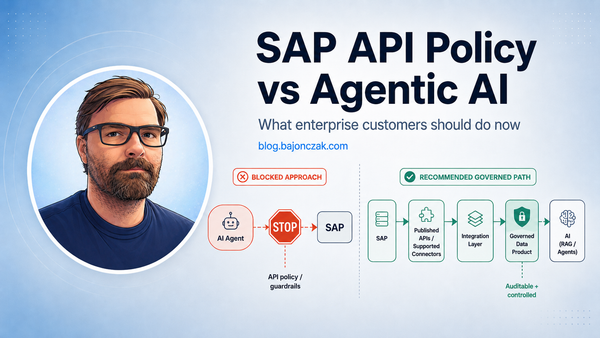

A quick industry signal: why platform boundaries are tightening

You can see the whole market moving toward tighter boundaries around agentic AI. A recent example is SAP tightening guidance and policy around third-party AI agents accessing SAP APIs directly.

Regardless of what you think about the politics, the architectural lesson is clear:

Platforms are becoming less tolerant of uncontrolled agent behavior. If your agent strategy depends on gray-area access paths, you’re building on sand.

That’s another reason I like the Azure approach of “tools + identities + logging”: it forces you to be explicit.

My take

Agent governance is not a single feature you can switch on. It’s a platform mindset.

If you want agents in production, you need:

- a registry you can trust,

- identities with clear blast radii,

- guardrails enforced in code via small tools,

- and observability you can audit.

Do that, and you can scale agents safely on Azure, even as models, vendors, and policies keep changing around you.