Building an MCP Server in TypeScript: Reacting to Signals, Querying BI, and Handing Off to a Second Agent

Most “AI agent” demos are still chat-first: you ask, the model answers.

That’s useful, but it’s not where enterprise value usually comes from.

Enterprise value shows up when an agent can react to signals (incidents, drops in revenue, failed jobs) and then orchestrate a sequence of well-scoped actions across systems — with clear logging and guardrails.

This is exactly the kind of workflow MCP (Model Context Protocol) is good at: instead of dumping a model into your infrastructure, you expose a small set of tools (with stable schemas) and let the agent call them.

In this post I’ll build a practical, slightly nerdy example in TypeScript:

- We set up an MCP server that reacts to a “signal” event.

- The MCP server queries a BI system for numbers (think: Fabric Warehouse / SQL, or a Power BI dataset).

- Then a second agent processes those numbers (e.g. detects anomalies, suggests a narrative) and produces an actionable summary.

I’ll keep it realistic: clear boundaries, small tools, and no “run arbitrary SQL” footguns.

The use case: revenue drop alert → BI query → anomaly summary

Imagine you have a daily revenue KPI dashboard in your BI platform. One morning, a monitoring rule fires:

- “Revenue today is down > 12% vs. the 7-day average.”

You want an automated response that:

- pulls the relevant numbers (today vs baseline, by region/product/channel),

- detects what changed (where the drop is concentrated),

- and posts a short summary into a Teams/Slack channel (or opens a ticket).

Crucially: you do not want an agent that can query your entire data warehouse freely. You want a small tool that returns a safe, predefined set of metrics.

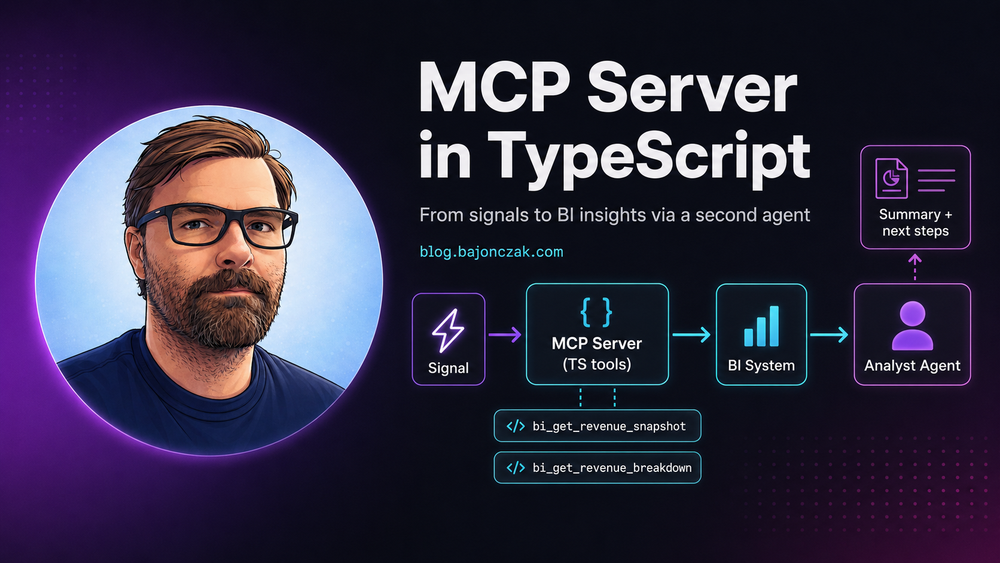

Architecture overview (two-agent pipeline)

flowchart LR

SIG[Signal Event: revenue_drop] --> A1[Agent 1: Orchestrator]

A1 --> MCP[MCP Server (Tools)]

MCP --> BI[BI System (Fabric Warehouse / SQL / Dataset)]

A1 --> A2[Agent 2: Analyst]

A2 --> OUT[Summary + Next Steps]

The roles:

- Agent 1 (Orchestrator): receives the event and decides which tools to call.

- MCP server: exposes tools like

bi_get_revenue_snapshotandbi_get_breakdown. - Agent 2 (Analyst): takes the numbers and produces a narrative + next actions.

You can host both agents in the same runtime, but I like the separation because it forces clean interfaces: the Analyst agent never talks to the BI system directly — it only sees structured numbers.

Step 1: define the data contracts

First, define the data structures you want to pass around. Keep them boring and explicit.

export type RevenueSignalEvent = {

type: 'revenue_drop';

tenantId: string;

occurredAt: string; // ISO

thresholdPct: number; // e.g. 12

metric: 'revenue';

currency: 'EUR' | 'USD';

scope: {

date: string; // YYYY-MM-DD

compareDays: number; // e.g. 7

};

};

export type RevenueSnapshot = {

date: string;

currency: string;

today: number;

baselineAvg: number;

deltaAbs: number;

deltaPct: number;

};

export type BreakdownRow = {

key: string; // e.g. region name, product group

today: number;

baselineAvg: number;

deltaPct: number;

};

export type RevenueBreakdown = {

dimension: 'region' | 'channel' | 'productGroup';

rows: BreakdownRow[];

};

Notice what’s missing: raw SQL, raw tables, any ability to query “whatever”. This is deliberate.

Step 2: implement the MCP server (TypeScript)

There are multiple MCP SDKs and runtimes out there. The pattern is always the same:

- Define tools with a name, description, JSON schema for inputs, and a handler.

- Return structured JSON results.

The following is an intentionally compact “shape” of an MCP server in TypeScript. Treat it as a template: wire it to your MCP runtime of choice.

import { z } from 'zod';

// Pretend types for your MCP framework runtime.

// Replace with the actual MCP SDK you use.

import {

createMcpServer,

ToolContext,

} from './mcpRuntime';

import { RevenueSnapshot, RevenueBreakdown } from './contracts';

import { biClient } from './biClient';

const RevenueSnapshotInput = z.object({

date: z.string().regex(/^\d{4}-\d{2}-\d{2}$/),

compareDays: z.number().int().min(1).max(30).default(7),

currency: z.string().default('EUR'),

});

const RevenueBreakdownInput = z.object({

date: z.string().regex(/^\d{4}-\d{2}-\d{2}$/),

compareDays: z.number().int().min(1).max(30).default(7),

dimension: z.enum(['region', 'channel', 'productGroup']),

topN: z.number().int().min(3).max(50).default(10),

currency: z.string().default('EUR'),

});

export function buildServer() {

const server = createMcpServer({

name: 'bi-insights-mcp',

version: '1.0.0',

});

server.tool({

name: 'bi_get_revenue_snapshot',

description: 'Returns today vs baseline revenue snapshot for a given date.',

inputSchema: RevenueSnapshotInput,

async handler(input: z.infer<typeof RevenueSnapshotInput>, ctx: ToolContext) {

// Optional: enforce tenant scoping and auth.

// ctx.user / ctx.tenant / ctx.roles

const snap: RevenueSnapshot = await biClient.getRevenueSnapshot({

date: input.date,

compareDays: input.compareDays,

currency: input.currency,

});

return snap;

},

});

server.tool({

name: 'bi_get_revenue_breakdown',

description: 'Returns revenue breakdown by dimension (region/channel/productGroup) for a given date.',

inputSchema: RevenueBreakdownInput,

async handler(input: z.infer<typeof RevenueBreakdownInput>, ctx: ToolContext) {

const breakdown: RevenueBreakdown = await biClient.getRevenueBreakdown({

date: input.date,

compareDays: input.compareDays,

dimension: input.dimension,

topN: input.topN,

currency: input.currency,

});

return breakdown;

},

});

return server;

}

// Typical main

if (require.main === module) {

const server = buildServer();

server.listen({

transport: 'stdio', // or http, depending on your setup

});

}

Step 3: implement a BI client (Fabric Warehouse / SQL example)

For the BI system, you have options:

- Fabric Warehouse via SQL endpoint,

- Lakehouse tables via SQL endpoint,

- Power BI dataset queries (DAX) via REST,

- or a custom API in front of your BI layer.

I’ll show a “Warehouse SQL” style, because it’s easy to reason about and common in Fabric.

Important: do not expose a generic query(sql) tool. Keep queries hard-coded in the backend and only allow safe parameters (date, topN, dimension).

// biClient.ts

import sql from 'mssql';

import { RevenueSnapshot, RevenueBreakdown } from './contracts';

const pool = new sql.ConnectionPool({

server: process.env.FABRIC_SQL_HOST!,

database: process.env.FABRIC_SQL_DB!,

user: process.env.FABRIC_SQL_USER!,

password: process.env.FABRIC_SQL_PASSWORD!,

options: { encrypt: true },

});

async function getPool() {

if (!pool.connected) await pool.connect();

return pool;

}

export const biClient = {

async getRevenueSnapshot(opts: {

date: string;

compareDays: number;

currency: string;

}): Promise<RevenueSnapshot> {

const p = await getPool();

// Example schema: fact_revenue(date, amount, currency, region, channel, productGroup)

// Baseline: average over prior N days.

const req = p.request();

req.input('date', sql.Date, opts.date);

req.input('compareDays', sql.Int, opts.compareDays);

req.input('currency', sql.VarChar(3), opts.currency);

const result = await req.query(`

WITH baseline AS (

SELECT AVG(amount) AS baselineAvg

FROM fact_revenue

WHERE currency = @currency

AND [date] >= DATEADD(day, -@compareDays, @date)

AND [date] < @date

),

today AS (

SELECT SUM(amount) AS today

FROM fact_revenue

WHERE currency = @currency

AND [date] = @date

)

SELECT

@date AS [date],

@currency AS currency,

t.today AS today,

b.baselineAvg AS baselineAvg,

(t.today - b.baselineAvg) AS deltaAbs,

CASE WHEN b.baselineAvg = 0 THEN NULL ELSE ((t.today - b.baselineAvg) / b.baselineAvg) * 100 END AS deltaPct

FROM today t

CROSS JOIN baseline b;

`);

const row = result.recordset[0];

return {

date: row.date,

currency: row.currency,

today: Number(row.today ?? 0),

baselineAvg: Number(row.baselineAvg ?? 0),

deltaAbs: Number(row.deltaAbs ?? 0),

deltaPct: row.deltaPct === null ? 0 : Number(row.deltaPct),

};

},

async getRevenueBreakdown(opts: {

date: string;

compareDays: number;

dimension: 'region' | 'channel' | 'productGroup';

topN: number;

currency: string;

}): Promise<RevenueBreakdown> {

const p = await getPool();

// Dimension is a controlled enum, so it’s safe to interpolate

// (never do this with arbitrary user input).

const dim = opts.dimension;

const req = p.request();

req.input('date', sql.Date, opts.date);

req.input('compareDays', sql.Int, opts.compareDays);

req.input('currency', sql.VarChar(3), opts.currency);

req.input('topN', sql.Int, opts.topN);

const result = await req.query(`

WITH baseline AS (

SELECT ${dim} AS [key], AVG(amount) AS baselineAvg

FROM fact_revenue

WHERE currency = @currency

AND [date] >= DATEADD(day, -@compareDays, @date)

AND [date] < @date

GROUP BY ${dim}

),

today AS (

SELECT ${dim} AS [key], SUM(amount) AS today

FROM fact_revenue

WHERE currency = @currency

AND [date] = @date

GROUP BY ${dim}

)

SELECT TOP (@topN)

COALESCE(t.[key], b.[key]) AS [key],

COALESCE(t.today, 0) AS today,

COALESCE(b.baselineAvg, 0) AS baselineAvg,

CASE WHEN COALESCE(b.baselineAvg, 0) = 0 THEN NULL

ELSE ((COALESCE(t.today, 0) - b.baselineAvg) / b.baselineAvg) * 100

END AS deltaPct

FROM today t

FULL OUTER JOIN baseline b ON t.[key] = b.[key]

ORDER BY ABS(COALESCE(((COALESCE(t.today, 0) - b.baselineAvg) / NULLIF(b.baselineAvg, 0)) * 100, 0)) DESC;

`);

return {

dimension: opts.dimension,

rows: result.recordset.map(r => ({

key: String(r.key),

today: Number(r.today ?? 0),

baselineAvg: Number(r.baselineAvg ?? 0),

deltaPct: r.deltaPct === null ? 0 : Number(r.deltaPct),

})),

};

},

};

Step 4: Agent 1 – Orchestrator that reacts to an event

Now we build an orchestrator that receives a signal event (webhook, message bus, scheduled job) and uses the MCP tools.

This example uses an HTTP webhook, but you can swap it with Azure Event Grid, Service Bus, whatever you like.

// orchestrator.ts

import express from 'express';

import { RevenueSignalEvent } from './contracts';

import { callMcpTool } from './mcpClient';

import { runAnalystAgent } from './secondAgent';

const app = express();

app.use(express.json());

app.post('/signals', async (req, res) => {

const event = req.body as RevenueSignalEvent;

if (event.type !== 'revenue_drop') {

return res.status(400).json({ error: 'unsupported_event' });

}

const { date, compareDays } = event.scope;

// 1) Pull snapshot

const snapshot = await callMcpTool('bi_get_revenue_snapshot', {

date,

compareDays,

currency: event.currency,

});

// 2) Pull breakdowns

const byRegion = await callMcpTool('bi_get_revenue_breakdown', {

date,

compareDays,

dimension: 'region',

topN: 10,

currency: event.currency,

});

const byChannel = await callMcpTool('bi_get_revenue_breakdown', {

date,

compareDays,

dimension: 'channel',

topN: 10,

currency: event.currency,

});

// 3) Hand off to Analyst Agent

const analysis = await runAnalystAgent({

event,

snapshot,

breakdowns: [byRegion, byChannel],

});

// 4) Return / post somewhere

// (Teams/Slack/Jira integration would go here.)

return res.json({ ok: true, analysis });

});

app.listen(3000, () => {

console.log('Orchestrator listening on :3000');

});

Step 5: Agent 2 – Analyst that turns numbers into narrative + next actions

The second agent is where you can use an LLM safely because it never sees raw warehouse access. It only gets:

- the event context,

- a snapshot,

- a few breakdown tables.

That’s enough to produce a human‑friendly summary without giving the model a free-form data exfiltration path.

// secondAgent.ts

import { RevenueSignalEvent, RevenueSnapshot, RevenueBreakdown } from './contracts';

import { llm } from './llmClient';

export async function runAnalystAgent(input: {

event: RevenueSignalEvent;

snapshot: RevenueSnapshot;

breakdowns: RevenueBreakdown[];

}): Promise<{ summary: string; nextSteps: string[] }> {

const prompt = `

You are an analyst agent.

Goal: explain a revenue drop in a short, actionable way.

Rules:

- Use only the numbers provided.

- Do not invent causes you cannot support.

- Provide a short summary + a small list of next steps.

Event:

${JSON.stringify(input.event, null, 2)}

Snapshot:

${JSON.stringify(input.snapshot, null, 2)}

Breakdowns:

${JSON.stringify(input.breakdowns, null, 2)}

`;

const response = await llm.generateJson({

schema: {

type: 'object',

properties: {

summary: { type: 'string' },

nextSteps: { type: 'array', items: { type: 'string' } },

},

required: ['summary', 'nextSteps'],

},

prompt,

});

return response;

}

A good output here looks like:

- “Revenue is down 14% vs. 7-day baseline. The largest negative deltas are in Region X and Channel Y.”

- Next steps like: check order intake, verify pricing feed, confirm whether a campaign ended, etc.

The agent is allowed to suggest checks, but not to claim root causes without evidence.

Security notes (what makes this enterprise-friendly)

There are three choices in this design that matter a lot in real environments:

- No raw query tools. The MCP server exposes safe metrics tools with strict schemas.

- Least privilege. The BI credentials used by the MCP server are read-only and scoped to the specific dataset.

- Separated responsibilities. The Analyst agent never touches BI directly; it only sees the curated numbers.

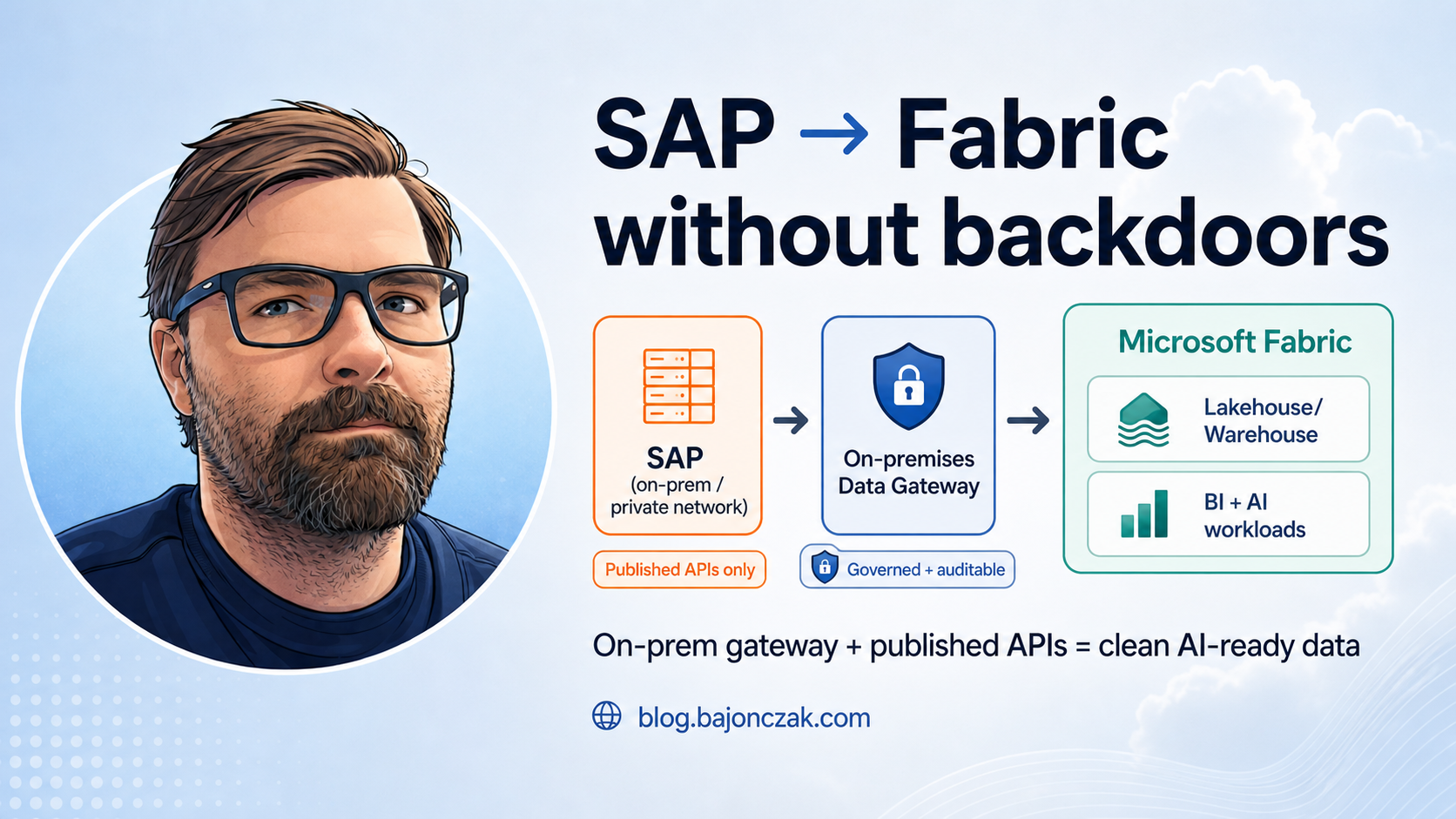

This is the same pattern I use in SAP/Entra scenarios: use MCP tools as a narrow “capability surface”, then let the model operate only on what you deliberately provide.

My take

Setting up an MCP server isn’t about novelty. It’s about discipline: tools with schemas, backends with guardrails, agents as orchestrators — not as free-form scripts with a personality.

If you build your first MCP server like this (small tools, safe contracts), you get two benefits immediately:

- You can plug different agent frontends into it over time (Copilot, Teams, web UI).

- You can add more “analyst agents” without increasing your blast radius, because the data surface stays controlled.

And that’s the real point: not “AI magic”, but an architecture that makes automation and reasoning more useful without making security and auditing impossible.